Interpretability of Machine Learning Methods in Credit Risk Measurement

Methods for explainability of ML models in the regulatory context of credit risk measurement – from SHAP to XAI frameworks.

Authors: Prof. Dr. Dirk Schieborn, Prof. Dr. Volker Reichenberger

Journal: Zeitschrift für das gesamte Kreditwesen

Year of publication: 2021

Abstract

Machine learning methods often deliver better predictive accuracy in credit risk measurement than classical statistical models – but their internal workings are harder to comprehend. This presents banks with a fundamental challenge: supervisory authorities expect rating systems to be explainable and open to validation.

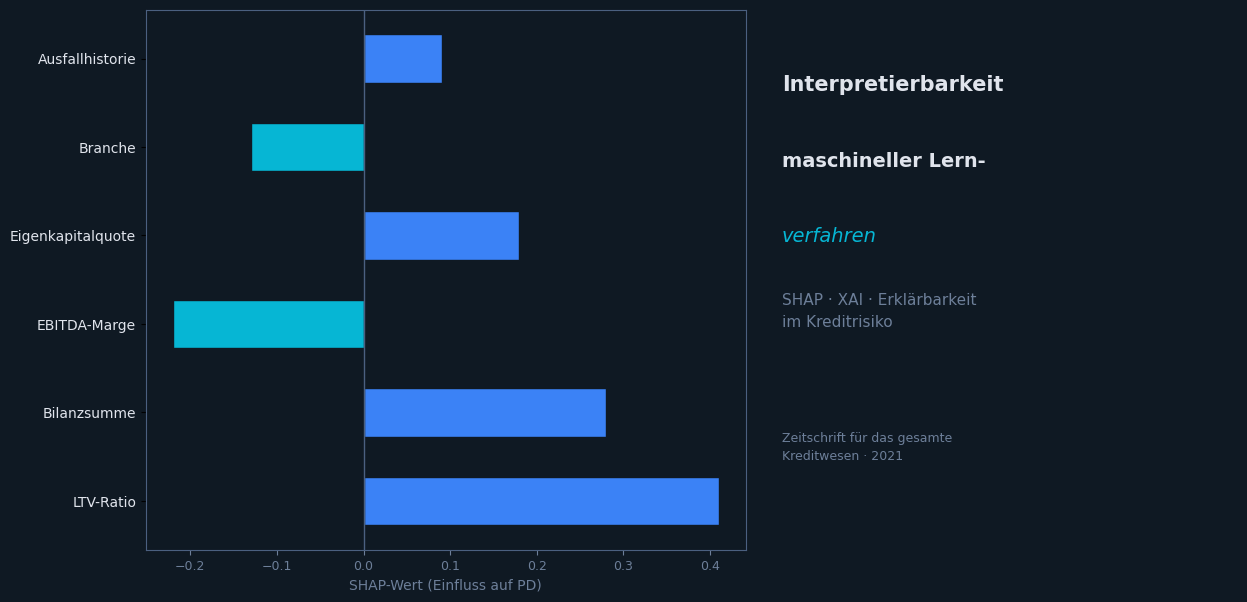

The article systematises the common approaches to interpretability (Explainable AI / XAI) and evaluates their practical applicability to rating systems under the internal ratings-based approach (IRBA). The focus is on:

- Model-based interpretability: decision trees, regularised linear models

- Post-hoc explainability: SHAP (SHapley Additive exPlanations), LIME

- Regulatory requirements: EBA guidelines, MaRisk, CRR documentation obligations
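To make the post-hoc idea concrete, the following is a minimal sketch of the Shapley attribution that SHAP is built on, computed by brute-force enumeration rather than with the `shap` library. The scoring function, feature values and background sample are hypothetical and chosen only for illustration; they are not taken from the article. Features outside a coalition are replaced by values from the background sample, which is one common convention and an assumption here.

```python
from itertools import combinations
from math import exp, factorial


def score(x):
    """Hypothetical toy credit score: logistic model with one interaction term."""
    z = -1.0 + 0.8 * x[0] - 0.5 * x[1] + 0.3 * x[0] * x[2]
    return 1.0 / (1.0 + exp(-z))


def coalition_value(model, x, background, subset):
    """Mean model output when features in `subset` are fixed to x
    and all other features are taken from each background row."""
    total = 0.0
    for row in background:
        mixed = [x[i] if i in subset else row[i] for i in range(len(x))]
        total += model(mixed)
    return total / len(background)


def shapley_values(model, x, background):
    """Exact Shapley values by enumerating every coalition (feasible only for few features)."""
    n = len(x)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for k in range(n):  # k = size of the coalition without feature i
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for coalition in combinations(others, k):
                without_i = set(coalition)
                gain = (coalition_value(model, x, background, without_i | {i})
                        - coalition_value(model, x, background, without_i))
                phi[i] += weight * gain
    return phi


# Hypothetical background sample and applicant (three anonymous features).
background = [[0.2, 1.0, 0.5], [1.5, 0.3, 1.0], [0.7, 0.7, 0.0], [1.0, 1.2, 2.0]]
applicant = [1.8, 0.4, 1.5]

phi = shapley_values(score, applicant, background)
baseline = coalition_value(score, applicant, background, set())

# Additivity: the baseline plus all attributions reproduces the applicant's score.
print(phi, baseline + sum(phi), score(applicant))
```

The enumeration grows exponentially with the number of features, so practical tools rely on approximations or model-specific algorithms. The additivity check in the last line is the property that makes such attributions straightforward to document: each feature's contribution to an individual score is stated explicitly and the contributions sum to the score's deviation from the baseline.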

Conclusion

A carefully chosen combination of high-performing ML methods and suitable explainability techniques enables banks to harness the potential of artificial intelligence in risk management – without compromising compliance with regulatory requirements.